A judge in Washington state has blocked video evidence that’s been “AI-enhanced” from being submitted in a triple murder trial. And that’s a great thing, given that too many people seem to think applying an AI filter can give them access to secret visual data.

Judge Leroy McCullough in King County, Washington wrote in a new ruling that the AI tech used “opaque methods to represent what the AI model ‘thinks’ should be shown,” according to a new report from NBC News on Tuesday. That’s a refreshing bit of clarity about what these AI tools actually do in a world of AI hype.

“This Court finds that admission of this AI-enhanced evidence would lead to a confusion of the issues and a muddling of eyewitness testimony, and could lead to a time-consuming trial within a trial about the non-peer-reviewable-process used by the AI model,” McCullough wrote.

The case involves Joshua Puloka, a 46-year-old accused of killing three people and wounding two others at a bar just outside Seattle in 2021. Lawyers for Puloka wanted to introduce AI-enhanced cellphone video captured by a bystander, though it’s not clear what they believe could be gleaned from the altered footage.

Puloka’s lawyers reportedly used an “expert” in creative video production who’d never worked on a criminal case before to “enhance” the video. The AI tool this unnamed expert used was developed by Texas-based Topaz Labs and is available to anyone with an internet connection.

The introduction of AI-powered imaging tools in recent years has led to widespread misunderstandings about what’s possible with this technology. Many people believe that running a photo or video through an AI upscaler gives them a clearer view of visual information that’s already there. In reality, the AI software isn’t revealing information hidden in the image: it is simply adding information that wasn’t there in the first place.
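To see why no upscaler can recover detail that the low-resolution pixels don’t contain, here is a minimal Python sketch. It assumes a simple 2x2 averaging downscale and uses plain pixel repetition as a stand-in for the “enhancer”; the helper names `downscale_2x` and `upscale_2x` are hypothetical, and this is not a description of how Topaz Labs’ software works internally. The point holds for any method that sees only the low-resolution image: two different scenes that shrink to identical pixels are indistinguishable to it, so whatever detail it adds is a guess, not a recovery.

```python
def downscale_2x(img):
    """Average each 2x2 block of a grayscale image into one pixel (box downsample)."""
    h, w = len(img), len(img[0])
    return [
        [
            (img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]) // 4
            for c in range(0, w, 2)
        ]
        for r in range(0, h, 2)
    ]


def upscale_2x(small):
    """Hypothetical stand-in 'enhancer': repeat each pixel into a 2x2 block.
    Like any upscaler, it can only work from the pixels in `small`."""
    out = []
    for row in small:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


# Two genuinely different high-resolution scenes (brightness 0-255).
scene_a = [
    [0, 255, 0, 255],
    [255, 0, 255, 0],
    [0, 255, 0, 255],
    [255, 0, 255, 0],
]  # a sharp checkerboard
scene_b = [[127] * 4 for _ in range(4)]  # flat gray

low_a = downscale_2x(scene_a)
low_b = downscale_2x(scene_b)

# Both scenes shrink to the exact same low-resolution image...
print(low_a == low_b)  # True
# ...so the upscaler gets identical input and cannot know which scene it came from.
print(upscale_2x(low_a) == scene_a)  # False: the checkerboard is gone for good
```

An AI model swaps the pixel-repeating step for a guess learned from training data, which can look far sharper, but it still has only that grid of 127s to work from.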

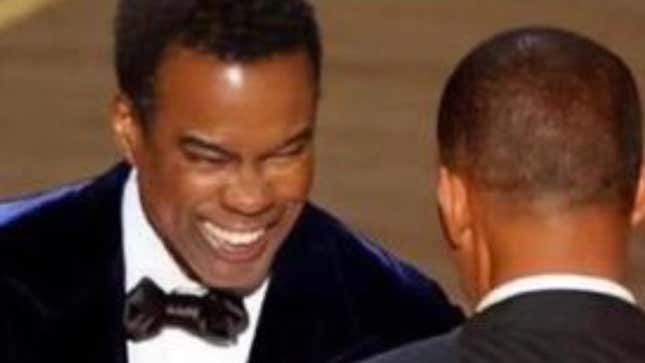

For example, there was a widespread conspiracy theory that Chris Rock was wearing some kind of face pad when Will Smith slapped him at the Academy Awards in 2022. The theory took off because people began running screenshots of the slap through image upscalers, believing they could get a better look at what was going on.

But that’s not what happens when you run images through AI enhancement. The program is just adding more information in an effort to make the image sharper, which can often distort what’s really there. The image that went viral was a pixelated screenshot, and once people fed it through AI programs they “discovered” things that simply weren’t there in the original broadcast.

Countless high-resolution photos and videos of the incident show conclusively that Rock didn’t have a pad on his face. But that didn’t stop people from believing they could see something hidden in plain sight by “upscaling” the image to “8K.”

The rise of products labeled as AI has created a lot of confusion among the average person about what these tools can really accomplish. Large language models like ChatGPT have convinced otherwise intelligent people that these chatbots are capable of complex reasoning when that’s simply not what’s happening under the hood. LLMs are essentially just predicting the next word they should spit out to sound like a plausible human. But because they do a pretty good job of sounding like people, many users believe they’re doing something more sophisticated than a magic trick.
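For a feel of what “predicting the next word” means at its very simplest, here is a toy Python sketch. It is only an assumption-laden analogy: it counts which word follows which in a tiny made-up corpus (a bigram model) and always picks the most frequent follower. Real LLMs use large neural networks over subword tokens trained on enormous datasets, so this shows the shape of the task, not how ChatGPT works. The function names `train_bigrams`, `predict_next`, and `generate` are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words followed it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen.
    Ties go to the follower encountered first (Counter.most_common behavior)."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows, start, length):
    """Greedily chain predictions; no understanding, just 'what usually comes next'."""
    out = [start]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# A tiny made-up training corpus.
corpus = "the judge wrote the ruling and the judge blocked the video"
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # "judge" (seen twice after "the")
print(generate(model, "the", 5))
```

The output can sound fluent for a few words simply because it echoes patterns in the training text, which is the point the paragraph above makes about plausibility versus reasoning.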

And that seems like the reality we’re going to live with as long as billions of dollars are getting poured into AI companies. Plenty of people who should know better believe there’s something profound happening behind the curtain, and they’re quick to blame “bias” and overly strict guardrails. But when you dig a little deeper, you discover these so-called hallucinations aren’t some mysterious force enacted by people who are too woke, or whatever. They’re simply a product of this AI tech not being very good at its job.

Thankfully, a judge in Washington has recognized that this tech isn’t capable of providing a better picture. That said, we don’t doubt there are plenty of judges around the U.S. who have bought into the AI hype and don’t understand the implications. It’s only a matter of time before an AI-enhanced video is used in court that shows nothing but visual information added well after the fact.